Our institute member Assoc. Prof. Gülsen Taskın Kaya’s TUBITAK 1001 project, “A Novel Explainable AI Method based on Sensitivity Analysis and its Application to Deep Learning Models for Remote Sensing Images”, has been funded for the period 2022-2024.

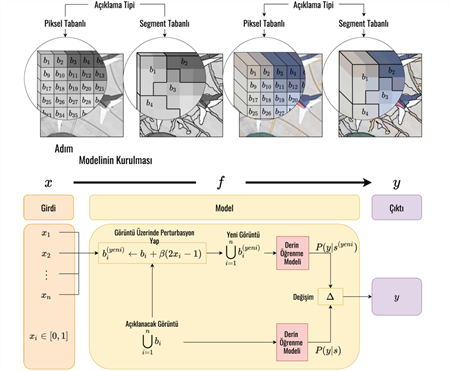

Deep learning methods can automatically produce highly accurate models, but as the number of hidden layers increases, the resulting models acquire an extremely complex internal structure. Such models are opaque: they offer no explicit explanation of why and how a decision was made. Especially in remote sensing problems, high accuracy alone is not enough; end users also need to understand why and how a deep learning model reached its decisions in order to analyze predictions and monitor the physical consistency between the model and the data. This project aims to address these problems by providing a sensitivity-based, model-agnostic explainable AI method for the scene classification problem in remote sensing.